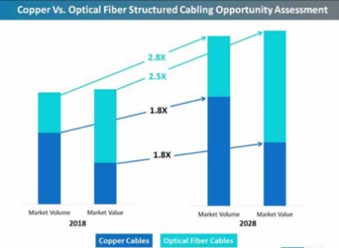

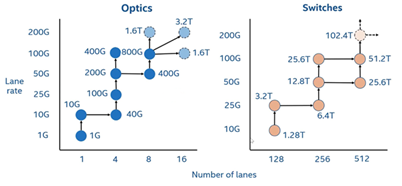

When it comes to data center (DC) cabling, much has been written about the protracted battle between fiber and copper for supremacy in the access layer. Regardless of which side you're on, there is no denying the accelerating changes affecting your cabling decision. Data center link speeds have quickly increased from 40 Gbps to 100 Gbps, and are even reaching 400 Gbps in larger enterprise and cloud-based data centers. Driven by more powerful ASICs, switches have become the workhorses of the data center. Network managers must therefore decide how to break out higher data capacities and deliver them from the switches to the servers in the most effective way possible. About the only thing that hasn't changed much is the relentless focus on reducing power consumption (the horse has gotta eat).

In this blog, we take a look at how data center trends and changes are shifting the balance between copper and fiber and what it can mean for today’s larger enterprise and cloud-based facilities.

Copper’s future in the data center network?

Today, optical fiber is used throughout the DC network. The exception is the in-cabinet switch-to-server connections in the equipment distribution areas (EDAs). Within a cabinet, copper continues to thrive, for the moment. Generally considered inexpensive and reliable, copper is a good fit for short top-of-rack switch connections and for applications below about 50 Gbps. It may be time to move on, however.

Copper's demise in the data center has long been predicted. Its useful distances continue to shrink, and its growing complexity makes it difficult for copper cabling to compete with steadily falling fiber optic costs. Still, the old medium has managed to hang on. However, data center trends may finally signal the end of twisted-pair copper in the data center. More importantly, so may the demand for faster throughput and design flexibility. Two of the biggest threats to copper's survival are its distance limitations and its rapidly increasing power requirements, in a world where power budgets are critical.

Signal loss over distance

As speeds increase, sending electrical signals over copper becomes much more complicated. Electrical transfer speeds are limited by ASIC capabilities, and much more power is required to reach even short distances. These issues limit the usefulness of even short-reach direct attached cables (DACs). Fiber optic alternatives, by contrast, become compelling because of their lower cost, lower power consumption and operational ease.

As switch capacity grows, copper's distance limitation becomes an obvious challenge. A single 1U network switch now supports multiple racks of servers. At the higher speeds today's applications require, copper cannot span even these short distances. As a result, data centers are moving away from traditional top-of-rack designs. They are shifting toward more efficient middle-of-row or end-of-row switch placements and structured cabling designs.

Power consumption

At speeds above 10G, twisted-pair copper (e.g., UTP/STP) deployments have virtually ceased due to design limitations. A copper link draws power at each end to support the electrical signaling. Today's 10G copper transceivers have a maximum power consumption of 3-5 watts[i]. That's about 0.5-1.0 W less than the transceivers used for DACs (see below), but it's nearly 10X more power than multimode fiber transceivers draw. Factor in the cost of removing the additional heat generated, and copper operating costs can easily be double those of fiber. The power penalty for copper extends beyond the network cables; it applies equally to the copper traces inside the switch. From end to end, these energy losses add up.
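To put those per-port watts in perspective, here is a back-of-the-envelope sketch in Python. It makes several assumptions, none of them measured values:

- A 10G copper transceiver draws 4 W, the midpoint of the 3-5 W range cited above.
- A multimode fiber transceiver draws one-tenth of that, taking "nearly 10X" literally.
- Each link has a transceiver at each end.
- `COOLING_FACTOR` (a crude stand-in for PUE) and `PRICE_PER_KWH` are hypothetical values chosen only for illustration.

```python
# Back-of-the-envelope energy comparison for one switch-to-server link.
# All inputs are illustrative assumptions, not measured or vendor values.

HOURS_PER_YEAR = 24 * 365  # 8760 hours

COPPER_W = 4.0           # midpoint of the 3-5 W cited for 10G copper transceivers
FIBER_W = COPPER_W / 10  # assumption: "nearly 10X" less for multimode fiber
ENDS_PER_LINK = 2        # one transceiver at each end of the link
COOLING_FACTOR = 1.5     # hypothetical: each IT watt needs ~0.5 W more for cooling
PRICE_PER_KWH = 0.10     # hypothetical electricity price in USD


def annual_kwh(watts_per_end: float) -> float:
    """Energy used by one link over a year, including the cooling overhead."""
    return watts_per_end * ENDS_PER_LINK * COOLING_FACTOR * HOURS_PER_YEAR / 1000


def annual_cost(watts_per_end: float) -> float:
    """Yearly electricity cost of one link at the assumed tariff."""
    return annual_kwh(watts_per_end) * PRICE_PER_KWH


if __name__ == "__main__":
    for name, watts in (("copper", COPPER_W), ("fiber", FIBER_W)):
        print(f"{name:>6}: {annual_kwh(watts):7.1f} kWh/yr, "
              f"${annual_cost(watts):6.2f}/yr per link")
```

Under these assumptions, a copper link uses about 105 kWh a year and a fiber link about 10.5 kWh. Swap in your own datasheet figures, PUE and tariff. The point is simply that a few watts per port multiply across every link and every hour of operation.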

While some in the data center still advocate for copper, justifying its continued use is becoming an uphill battle. With this in mind, let's look at some alternatives.

Direct attached copper (DAC)

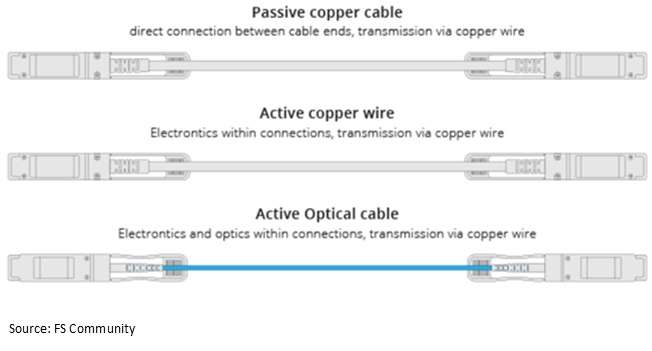

Copper DACs, both active and passive, have stepped in to link servers to switches. Rather than twisted pair, DAC cabling uses shielded twinaxial copper cable with plug-in, transceiver-like connectors on either end. Passive DACs rely on the signals supplied by the host. Active DACs, by contrast, use internal electronics to boost and condition the electrical signals. This lets active DACs support higher speeds over longer distances, but they also consume more power. Both active and passive DACs are considered inexpensive. Still, as a copper-based medium, they face attenuation-over-distance limitations that are significant obstacles to the technology's future. Their short reach keeps servers tied to a switch in the same rack. Meanwhile, a single new switch can replace several top-of-rack (TOR) switches and save a lot of capital and power. DACs may therefore not be the low-cost solution they appear to be on the surface.

Active optical cable (AOC) is a step up from both active and passive DACs, with bandwidth performance up to 400 Gbps. Plus, as a fiber medium, AOC is lighter and easier to handle than copper. However, the technology comes with some serious limitations. AOCs must be ordered to length, then replaced each time the DC increases speeds or changes switching platforms. Like DACs, each AOC comes as a complete assembly, so if any component fails, the entire assembly must be replaced. This is a drawback compared with optical transceivers and structured cabling, which provide the most flexibility and operational ease.

Perhaps more importantly, both AOC and DAC cabling are point-to-point solutions. They therefore exist outside the structured cabling network, which makes scaling, management and overall operational efficiency more difficult. Second, as fixed-cabling solutions, AOCs and DACs lack the flexibility data centers need. That flexibility is required to support higher-capacity server networks and to implement new, more efficient server row designs. And each time you increase speeds or change a switch, you've got to replace the AOCs and DACs.

Pluggable optics

Meanwhile, pluggable optical transceivers continue to improve to take advantage of the increased capabilities of switch technologies. In the past, a single transceiver carried four lanes (a.k.a. quad small form-factor pluggable [QSFP]). That enabled DC managers to connect four servers to a single transceiver. The result was an estimated 30 percent reduction in network cost compared with duplex port-based switches (and, arguably, 30 percent lower power consumption). Newer switches connecting eight servers per transceiver double down on those cost and power savings. Better yet, when the flexibility of structured cabling is added, the overall design becomes scalable. To that end, the IEEE has introduced the 802.3cm standard and is working on P802.3db, which seeks to define transceivers built for attaching servers to switches.
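The breakout arithmetic behind those savings is simple to sketch. The Python below counts only the switch-side transceivers needed to reach a given number of servers at one, four or eight servers per transceiver. The server count and breakout labels are hypothetical. The sketch does not attempt to reproduce the 30 percent cost figure, which also depends on pricing that isn't given here.

```python
import math

# Hypothetical breakout options: servers reached by one switch-side transceiver.
BREAKOUTS = {
    "duplex (1 server per port)": 1,
    "QSFP 4-lane breakout": 4,
    "8-lane breakout": 8,
}


def switch_transceivers_needed(servers: int, servers_per_transceiver: int) -> int:
    """Switch-side transceivers needed so every server gets a lane (rounded up)."""
    return math.ceil(servers / servers_per_transceiver)


if __name__ == "__main__":
    servers = 96  # hypothetical server count for one switch
    for name, fanout in BREAKOUTS.items():
        n = switch_transceivers_needed(servers, fanout)
        print(f"{name}: {n} switch-side transceivers")
```

For 96 servers, that works out to 96, 24 and 12 switch-side transceivers, respectively. Each server still needs its own transceiver, so the savings come from the switch side of the link.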

Co-packaged optics

Still in the early stages, co-packaged optics (CPOs) move the electrical-to-optical conversion engine closer to the ASIC—eliminating the copper trace in the switch. The idea is to eliminate the electrical losses associated with the short copper links within the switch to achieve higher bandwidth and lower power consumption. Proponents of the technology argue it is the best way to move to the next-generation platform and higher speeds while maintaining an affordable power budget. Their goal is to remove the last few inches of copper from the network link to achieve the all-optical, ASIC-to-ASIC efficiency DC networks will need. It is an ambitious objective that will require industry-wide collaboration and new standards to ensure interoperability.

Where we’re headed (sooner than we think)

The march toward 800G and 1.6T will continue; it must if the industry is to have any chance of meeting customer expectations regarding bandwidth, latency, AI, IoT, virtualization and more. At the same time, data centers must have the flexibility to break out and distribute traffic from higher-capacity switches in ways that make sense, both operationally and financially. This points to the need for cabling and connectivity solutions that can support diverse fiber-to-the-server applications, such as all-fiber structured cabling.

Right now, based on the IEEE Ethernet roadmap, 16-fiber infrastructures can provide a clean path to those higher speeds while making efficient use of the available bandwidth. Structured cabling using the 16-fiber design also lets us break out the capacity so that a single switch can support 192 servers. In terms of latency and cost savings, the gains are significant. At CommScope, this is what we're seeing and hearing from our large enterprise and cloud data center customers. Most of them have reached a tipping point and are going all in on fiber, because they look at the hyperscalers and realize what's coming.
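One way to see why a 16-fiber design scales is to treat it as eight transmit/receive pairs, or eight lanes. Port speed is then the lane count times the lane rate, and each lane can be broken out to one server. A 50 Gbps lane rate gives 400G, as in IEEE 802.3cm's eight-lane 400GBASE-SR8. A 100 Gbps lane rate gives 800G. The 200 Gbps lane rate below is an assumption used only to show the 1.6T step.

The post doesn't say how it arrives at 192 servers. One combination that produces that number is 24 server-facing ports at eight servers each, used here purely as a hypothetical.

```python
# Sketch: capacity of a 16-fiber port and servers reachable via breakout.
# Assumes 16 fibers = 8 Tx/Rx pairs (8 lanes), one lane per server on breakout.

FIBERS_PER_PORT = 16
LANES = FIBERS_PER_PORT // 2  # one transmit + one receive fiber per lane -> 8

# Per-lane rates in Gbps: 50 (as in 400GBASE-SR8), 100, and an assumed
# future 200 Gbps multimode lane used only for illustration.
LANE_RATES_GBPS = (50, 100, 200)


def port_speed_gbps(lane_rate_gbps: int, lanes: int = LANES) -> int:
    """Aggregate port speed when every lane runs at the same rate."""
    return lane_rate_gbps * lanes


def servers_per_switch(server_ports: int, servers_per_port: int = LANES) -> int:
    """Servers reachable when each port is broken out one lane per server."""
    return server_ports * servers_per_port


if __name__ == "__main__":
    for rate in LANE_RATES_GBPS:
        print(f"{rate}G lanes x {LANES} = {port_speed_gbps(rate)}G per 16-fiber port")
    # Hypothetical port count; the source does not state its assumptions.
    print(f"24 ports -> {servers_per_switch(24)} servers")
```

The fiber count stays the same at every step. Only the transceivers change as lane rates rise, which is the appeal of building the structured cabling around 16 fibers.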

The $64,000 question is: Has copper finally outlived its usefulness? The short answer is no. There will continue to be certain low-bandwidth, short-reach applications for smaller data centers where copper’s low price point outweighs its performance limitations. So too, there will likely be a role for CPOs and front-panel-pluggable transceivers. There simply is not a one-size-fits-all solution.

That being said, here's a bit of advice for anyone wondering whether it's time to phase out copper and go all in on fiber. In the words of writer Damon Runyon, "The race doesn't always go to the swift or the battle to the strong, but it's sure as hell the way to bet."

[i] Data Center Reference Guide; FOA.org; https://www.thefoa.org/tech/ref/appln/datacenters.html