Editor’s Note: This is the seventh installment in our “Meet the RF Experts” series, in which contributors to the Understanding the RF Path e-book elaborate on subjects in their areas of expertise.

Reliability is often defined as the probability that a product or service will work as intended for a specified time under specified operating conditions. Like other performance features, reliability is “designed-in” to products to meet customer needs. Unlike other features, however, reliability describes future performance: how it changes over time and how it varies with use conditions. What is often not understood is that only past reliability can be measured. Future reliability must be predicted by considering questions like:

- Does a product or service work where and how it will be used?

- How often will it fail and, when it does, will it be repaired or replaced?

- How does it fail and what happens when it does?

- How long can it last until it has to be replaced?

The first of these questions can be answered with validation tests, which show that specifications are met and that 100% reliability is possible, at least at the start of operating life. The other questions concern how reliability will hold up after that point, which must be predicted. Two approaches are used for this, often in combination: one considers the statistics of failure, the other the physics of failure.

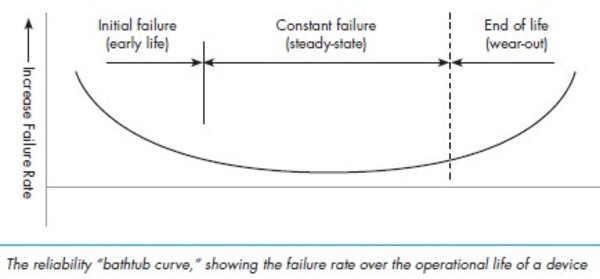

The statistics approach looks at past failure trends in large populations to predict future failure rates and mean time between failures (MTBF). The physics approach considers why specific failures happened and how to improve designs to reduce failure rates or extend useful life.
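For the statistics approach, a common simplifying assumption is that the failure rate is constant during a product's useful life, which makes time to failure exponentially distributed. Under that assumption the failure rate λ is the number of failures divided by cumulative operating hours, MTBF is 1/λ, and the probability of surviving a time t is R(t) = exp(-t/MTBF). The minimal Python sketch below applies this to field data; the function names and the fleet figures are hypothetical, chosen only for illustration.

```python
import math

HOURS_PER_YEAR = 8760  # 365 days x 24 hours of continuous operation


def failure_rate_and_mtbf(units, hours_per_unit, failures):
    """Point estimates from field data, assuming a constant failure rate.

    Returns (failure rate in failures per hour, MTBF in hours).
    """
    if failures <= 0:
        raise ValueError("a point estimate needs at least one observed failure")
    total_hours = units * hours_per_unit  # cumulative operating time of the population
    rate = failures / total_hours         # lambda: failures per operating hour
    return rate, 1.0 / rate               # MTBF = 1 / lambda


def reliability(t_hours, mtbf_hours):
    """Probability of operating t hours without failure: R(t) = exp(-t / MTBF)."""
    return math.exp(-t_hours / mtbf_hours)


def annual_failure_rate(mtbf_hours):
    """Fraction of units expected to fail during one year of continuous use."""
    return 1.0 - reliability(HOURS_PER_YEAR, mtbf_hours)


# Hypothetical fleet: 2,000 units, each run for one year, with 35 failures reported.
rate, mtbf = failure_rate_and_mtbf(units=2000, hours_per_unit=HOURS_PER_YEAR, failures=35)
afr = annual_failure_rate(mtbf)
print(f"failure rate: {rate:.3e} per hour")
print(f"MTBF: {mtbf:,.0f} hours")
print(f"annual failure rate: {afr:.2%}")
```

With these made-up numbers the MTBF comes out at roughly 500,000 hours and the annual failure rate at about 1.7%. An MTBF far longer than any unit's service life is not a lifetime promise: with a constant failure rate, only about 37% of units (e^-1) are expected to survive to one MTBF. A more rigorous analysis would also put confidence bounds on λ, which this sketch omits.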

Predicting the annual failure rate of a new product like a remote radio head shows how these approaches are used together. The past reliability of electronic components is known so well that the failure rates of the individual parts in a system can be summed to predict a baseline rate for the whole system, on the assumption that the failure of any one part causes the system to fail. While doing this, each part’s failure rate is adjusted according to how it is used and how it is physically stressed by temperature, voltage or other factors. In this way, the future failure rate of a product can be predicted before the product even exists.
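The parts-based prediction described above can be sketched the same way. Handbook methods such as Telcordia SR-332 and MIL-HDBK-217F follow this general structure with their own tabulated base rates and adjustment factors; the version below instead uses an Arrhenius temperature factor and a single stress multiplier, and every part, failure rate, activation energy and reference temperature in it is a hypothetical placeholder rather than real component data. Rates are in FIT (failures per 10^9 device-hours), and the product is assumed to be a series system with constant failure rates, so the adjusted part rates simply add.

```python
import math
from dataclasses import dataclass

BOLTZMANN_EV_PER_K = 8.617e-5  # Boltzmann constant in eV/K
HOURS_PER_YEAR = 8760


@dataclass
class Part:
    name: str
    base_fit: float              # hypothetical failure rate per part at the reference temperature, in FIT
    quantity: int                # number of identical parts in the product
    activation_ev: float         # hypothetical Arrhenius activation energy, in eV
    stress_factor: float = 1.0   # hypothetical multiplier for voltage or other electrical stress


def arrhenius_factor(activation_ev, t_use_c, t_ref_c=40.0):
    """Temperature acceleration of the failure rate relative to t_ref_c (both in deg C)."""
    t_use_k = t_use_c + 273.15
    t_ref_k = t_ref_c + 273.15
    return math.exp((activation_ev / BOLTZMANN_EV_PER_K) * (1.0 / t_ref_k - 1.0 / t_use_k))


def system_fit(parts, t_use_c):
    """Series system: the product fails if any part fails, so adjusted rates add."""
    return sum(
        p.quantity * p.base_fit * arrhenius_factor(p.activation_ev, t_use_c) * p.stress_factor
        for p in parts
    )


def annual_failure_fraction(fit):
    """Expected fraction of units failing in a year, assuming a constant failure rate."""
    return 1.0 - math.exp(-fit * 1e-9 * HOURS_PER_YEAR)


# Hypothetical bill of materials for a radio-head-like product (illustrative numbers only).
parts = [
    Part("power amplifier transistor", base_fit=50.0, quantity=2, activation_ev=0.7, stress_factor=1.5),
    Part("ceramic capacitor", base_fit=0.5, quantity=400, activation_ev=0.3),
    Part("FPGA", base_fit=30.0, quantity=1, activation_ev=0.7),
    Part("connector", base_fit=2.0, quantity=20, activation_ev=0.1),
]

for temp_c in (40.0, 65.0):
    fit = system_fit(parts, temp_c)
    print(f"{temp_c:.0f} C: {fit:.0f} FIT, MTBF {1e9 / fit:,.0f} h, "
          f"annual failure fraction {annual_failure_fraction(fit):.2%}")
```

With these placeholder values the sketch predicts 420 FIT at the 40 C reference and roughly four times that at 65 C. The temperature term is where the physics of failure enters the statistical sum: a design change that lowers a hot component's operating temperature shows up directly as a lower predicted system rate.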

We go into more detail about reliability in wireless systems in chapter 11 of the Understanding the RF Path e-book. Reliability is an important concept to understand because it affects total cost of ownership and quality of service, among other network concerns. As a manufacturer of RF Path solutions, CommScope takes reliability seriously.

Do you have any experience in predicting reliability for products or services? Do you have any best practices to share? I’d love to hear about them. Leave a comment if so.