In their search for bigger, faster pipes, network operators are currently focusing on two areas: improved optics and the increasing use of multiplexing. Of course, the two are not mutually exclusive. Many of the most attractive technologies involve both multiplexing and improved optics. We’ll get to that in just a moment, but for now, let’s focus on multiplexing.

CommScope’s Jason Bautista explains how network operators should be focusing on multiplexing in their data center.

A closer look at wavelength division multiplexing

While a variety of analog and digital multiplexing technologies exist, the most commonly used approach in the data center is wavelength division multiplexing (WDM). WDM uses different wavelengths of light to create multiple data paths on the same fiber.

There are multiple ways to achieve WDM, the two most common being coarse wavelength division multiplexing (CWDM) and dense wavelength division multiplexing (DWDM). The functional difference between the two is the spacing between the optical signals. CWDM channels are spaced 20nm apart, compared with spacing as tight as 0.4nm for DWDM. The wider spacing tolerates more wavelength drift, which allows the use of low-cost, uncooled lasers for CWDM.
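DWDM channels are usually specified on a frequency grid rather than in nanometers, so it can help to see where a figure like 0.4nm comes from. The sketch below applies the approximation Δλ ≈ λ²·Δf / c. The 1550nm center wavelength and the function name `spacing_nm` are assumptions chosen for illustration, not values from this article.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def spacing_nm(center_nm: float, grid_ghz: float) -> float:
    """Approximate wavelength spacing (nm) for a frequency grid (GHz) near center_nm."""
    center_m = center_nm * 1e-9
    delta_f_hz = grid_ghz * 1e9
    # delta_lambda ~= lambda^2 * delta_f / c, converted back to nanometers
    return (center_m ** 2) * delta_f_hz / C * 1e9


# Assumed center wavelength of 1550 nm (illustrative only)
dwdm_50 = spacing_nm(1550.0, 50.0)    # roughly 0.4 nm
dwdm_100 = spacing_nm(1550.0, 100.0)  # roughly 0.8 nm

# How many times wider a 20 nm CWDM channel spacing is than the 50 GHz DWDM spacing
cwdm_to_dwdm_ratio = 20.0 / dwdm_50

print(round(dwdm_50, 2), round(dwdm_100, 2), round(cwdm_to_dwdm_ratio))
```

Under these assumptions, a 50 GHz grid works out to about 0.4nm, which makes a 20nm CWDM spacing roughly 50 times wider.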

Within CWDM, emerging technologies include CLR4 and CWDM4. These technologies use four wavelengths (1270nm, 1290nm, 1310nm and 1330nm) to create four data channels. As is typical with other WDM approaches, the use of CWDM gives data center operators the ability to support higher throughputs over duplex fiber optic connections.

Both CWDM and DWDM are used primarily in longer-range links (500m to 70km). A third WDM-based technology, which is rapidly gaining a following in the data center, is shortwave wavelength division multiplexing (SWDM). SWDM takes advantage of four shorter wavelengths (850, 880, 910 and 940nm) spaced 30nm apart. For data center managers, SWDM is especially attractive because of its capacity and cost-effectiveness in short-reach applications. (More on this in a moment.)
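The four-wavelength plans quoted above for CWDM4 and SWDM follow directly from a starting wavelength and a fixed spacing. A minimal sketch, in which the helper name `channel_plan` is hypothetical and the numbers are the ones given in the text:

```python
def channel_plan(first_nm: int, spacing_nm: int, count: int) -> list[int]:
    """Return evenly spaced channel center wavelengths in nanometers."""
    return [first_nm + i * spacing_nm for i in range(count)]


cwdm4 = channel_plan(1270, 20, 4)  # 20 nm spacing
swdm4 = channel_plan(850, 30, 4)   # 30 nm spacing

print("CWDM4:", cwdm4)
print("SWDM4:", swdm4)
```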

Within the data center, there are two primary areas where WDM is expected to play a larger role in addressing the capacity crunch. The first is in the interconnects coming into the data center. The requirements of current and emerging applications like 5G, IoT and M2M learning are driving the need for more high-speed connectivity between the data center and outside networks. WDM is being used to enlarge the pipes and meet the increased demand for capacity and speed.

The second area where WDM is expected to have a more prominent role is in boosting connectivity between network switches. As data centers move from the traditional three-tier topology to mesh-type leaf-and-spine designs, server port density becomes crucial. High-speed fiber switch connections carry more server traffic per link, which leaves more switch ports free for server connections.
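The port-density trade-off can be illustrated with some simple arithmetic. Every number below (a 48-port switch and 1,200G of required uplink bandwidth) is hypothetical and chosen only to make the effect visible, and `port_budget` is an illustrative helper rather than part of any product or standard.

```python
import math


def port_budget(total_ports: int, uplink_demand_gbps: int, uplink_speed_gbps: int) -> tuple[int, int]:
    """Return (uplink ports needed, ports left for servers) for one switch."""
    uplinks = math.ceil(uplink_demand_gbps / uplink_speed_gbps)
    return uplinks, total_ports - uplinks


# Hypothetical switch: 48 ports, 1,200G of uplink bandwidth required
at_100g = port_budget(48, 1200, 100)
at_400g = port_budget(48, 1200, 400)

print("100G uplinks:", at_100g)
print("400G uplinks:", at_400g)
```

In this made-up case, moving the uplinks from 100G to 400G cuts the uplink port count from 12 to 3 and frees nine more ports for servers.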

Using various WDM schemes enables network managers to achieve the right balance of performance, density and cost. A bi-directional (BiDi) connection, for example, uses two wavelengths over a duplex circuit to double link capacity. That doubling becomes important as speeds progress from 100G to 400G and beyond. BiDi’s speed-doubling enables higher speeds while maintaining the original duplex fiber links.

Combining multiplexing with advanced optics

As noted earlier, addressing the capacity crunch will most likely require a combination of advanced fiber optics and different multiplexing schemes. One example is the use of SWDM over OM5 multimode fiber (MMF). Whereas BiDi supports two different wavelengths for double the capacity, SWDM4 enables data centers to transmit four wavelengths, quadrupling capacity. SWDM4 also reaches a given capacity using lower lane rates. For example, 100G BiDi must use two 50G lanes, whereas SWDM4 achieves the same capacity with four lanes of 25G. Lower lane rates often mean longer links can be supported. The OM5 MMF, meanwhile, is specifically designed to support longer wavelengths. Better support for longer wavelengths enables support for longer links as well. For example, the newest BiDi application is a 400G link using four BiDi channels to support 100m on OM4 and 150m on OM5.
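The lane arithmetic behind the 100G comparison is simply the number of wavelengths multiplied by the rate per lane. A minimal sketch using the figures from the paragraph above, in which the `LinkOption` class is a hypothetical name introduced only for this illustration:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkOption:
    name: str
    wavelengths: int      # lanes carried over the duplex link
    lane_rate_gbps: int   # data rate per lane

    @property
    def capacity_gbps(self) -> int:
        # Aggregate capacity is lanes multiplied by the per-lane rate
        return self.wavelengths * self.lane_rate_gbps


bidi_100g = LinkOption("100G BiDi", wavelengths=2, lane_rate_gbps=50)
swdm4_100g = LinkOption("100G SWDM4", wavelengths=4, lane_rate_gbps=25)

for option in (bidi_100g, swdm4_100g):
    print(f"{option.name}: {option.wavelengths} x {option.lane_rate_gbps}G = {option.capacity_gbps}G")
```

Both options arrive at 100G, but SWDM4 does so at half the lane rate of BiDi.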

Data center operators use multimode optics and WDM to achieve 100G connections using inexpensive VCSEL-based optics that also require much less power than their singlemode equivalents.

Spatial division multiplexing

Until now, WDM has been the technology of choice in the data center, due in part to the fact that it is among the most mature of the multiplexing solutions. Research into spatial division multiplexing (SDM) may offer a way to extend the potential bandwidth of optical systems further. One type of SDM uses multicore fiber (MCF), in which a single fiber contains multiple cores. Each core then multiplies the overall capacity of the MCF.

Currently, MCF is being considered for very high capacity trans-oceanic links. In the future, there may be opportunities to combine WDM and SDM to support increased lane capacity. According to a 2014 article in Nature Photonics, “by combining this approach (SDM) with wavelength division multiplexing with 50 wavelength carriers on a dense 50 GHz grid, a gross transmission throughput of 255 Tbit s−1 (net 200 Tbit s−1) over a 1 km fibre link is achieved.”
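To put the quoted figures in perspective, the gross throughput can be divided across the 50 wavelength carriers and compared with the net figure. The sketch below uses only the numbers in the quotation. The variable names are illustrative, and it assumes the difference between gross and net throughput is overhead.

```python
gross_tbps = 255.0  # gross throughput from the quotation, Tbit/s
net_tbps = 200.0    # net throughput from the quotation, Tbit/s
carriers = 50       # wavelength carriers on the 50 GHz grid

# Gross throughput carried by each wavelength across all spatial channels
gross_per_carrier_tbps = gross_tbps / carriers

# Share of the gross rate that remains as net throughput
net_fraction = net_tbps / gross_tbps

print(f"Gross per wavelength carrier: {gross_per_carrier_tbps:.1f} Tbit/s")
print(f"Net share of gross throughput: {net_fraction:.1%}")
```

Each wavelength carrier therefore accounts for about 5.1 Tbit/s of gross throughput, far more than a single core could be expected to carry on one wavelength, which is the contribution of the spatial dimension.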

Beyond its current use in long-haul submarine communications, SDM may also have applications in smaller-scale regional networks. As with other forms of multiplexing, spatial division multiplexing is expected to be used in conjunction with other techniques, including wavelength division multiplexing, rather than as a replacement for them.

What are the data center decision drivers?

For data center managers, understanding the differences between the various multiplexing technologies is just one step in a much larger task that involves deciding how and where they should be used. Capacity and latency are primary drivers, but the overall cost, size and scope of the data center will have a significant impact as well.

For large hyperscale data centers, the link distances may mean that singlemode is the most cost-effective solution, with multiplexing added as needed to balance fiber and transceiver costs. Enterprise data centers, being typically smaller, may be able to leverage more multimode infrastructure.

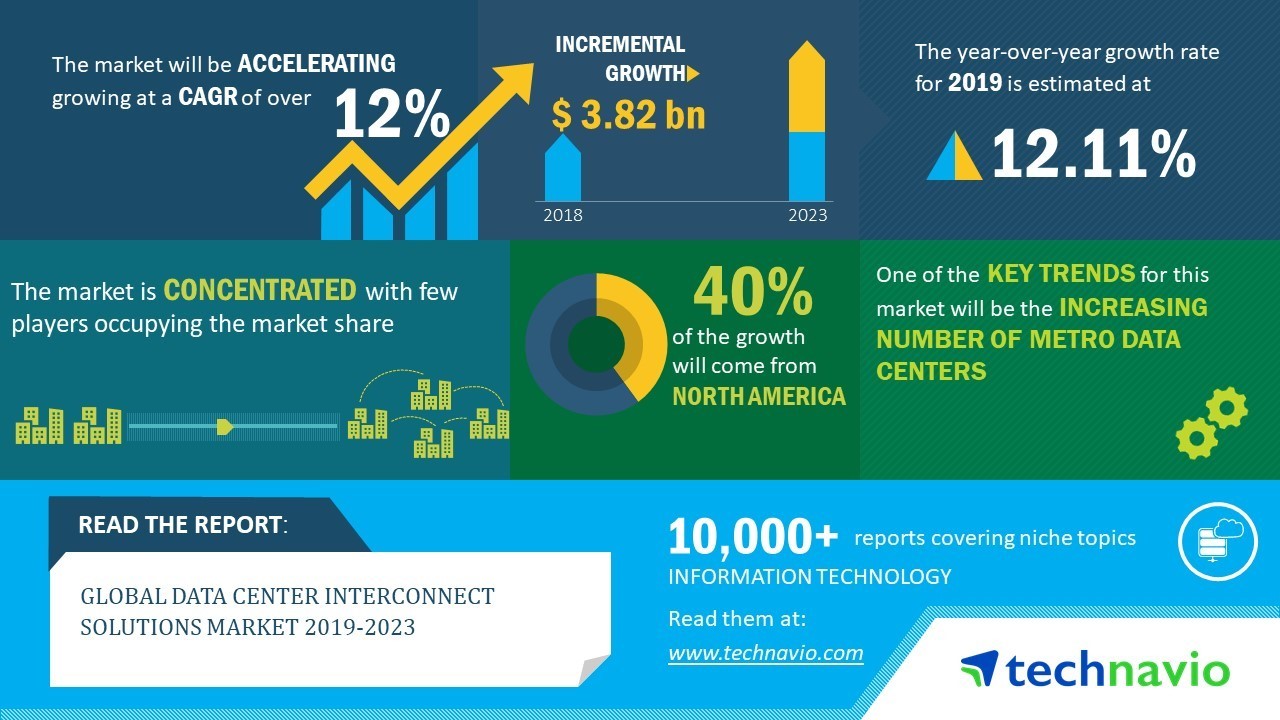

Global Data Center Interconnect Market Forecast: 2019-2023

Source: TechNavio